Networking using Services

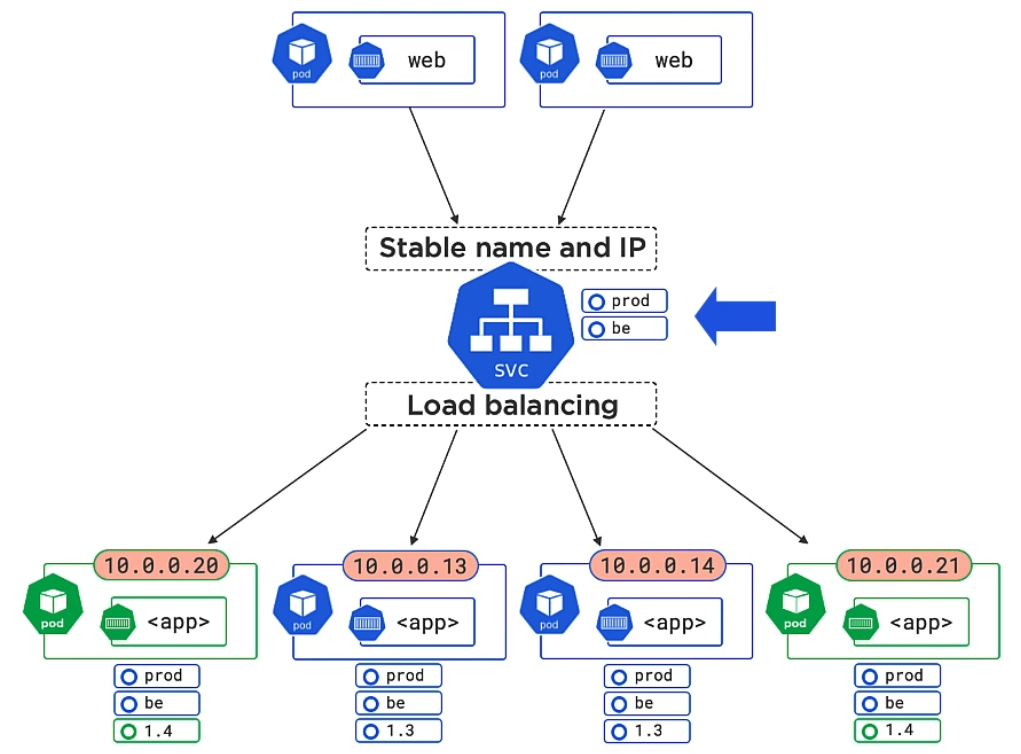

Each Pod has its own IP address. Containers within a Pod can communicate with each other over localhost via their ports. But how does one Pod communicate with another? We cannot rely on a Pod’s IP address, because Pods, and with them their IP addresses, are created and destroyed dynamically. The solution is a Kubernetes Service: it sits in front of a group of Pods and gives us a stable DNS name and IP address that do not change for the lifetime of the Service, no matter how many Pods are created or destroyed behind it.

How does a Service know which Pods it should send traffic to? Answer: through its label selector. A Pod is selected if it carries at least (!) all the labels listed in the Service’s selector; additional labels on the Pod don’t matter.
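A minimal sketch of the “at least” rule, using hypothetical names (web-svc, web-v13 and the labels are made up for illustration): the Pod carries more labels than the selector asks for, and it is still selected because every label in the selector is present on it.

```yaml
# Hypothetical Service: selects every Pod labeled app: web
apiVersion: v1
kind: Service
metadata:
  name: web-svc
spec:
  selector:
    app: web              # the only label a Pod must carry to be selected
  ports:
    - port: 80
      targetPort: 80
---
# Hypothetical Pod: has an extra label, but still matches the selector above
apiVersion: v1
kind: Pod
metadata:
  name: web-v13
  labels:
    app: web              # required by the selector
    version: "1.3"        # extra label, ignored by this selector
spec:
  containers:
    - name: web
      image: nginx:1.25   # any image serving on port 80 would do
      ports:
        - containerPort: 80
```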

In the image above, the Service’s selector doesn’t include a version label, so it matches the Pods labeled “1.3” as well as those labeled “1.4”, and traffic is load balanced across both versions. As a next step you could add the version label “1.4” to the Service’s selector to kick the “1.3” Pods out.
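Narrowing the selector could look like this sketch (the names web-svc and app: web are hypothetical). Note the quotes around “1.4”: label values must be strings, and an unquoted 1.4 would be parsed by YAML as a number.

```yaml
# Hypothetical Service: now only Pods labeled app: web AND version: "1.4" match
apiVersion: v1
kind: Service
metadata:
  name: web-svc
spec:
  selector:
    app: web
    version: "1.4"        # quoted, because label values must be strings
  ports:
    - port: 80
      targetPort: 80
```

After applying this change with kubectl apply -f, the 1.3 Pods keep running but no longer receive traffic through web-svc.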

Nice to know: Services only send traffic to healthy (ready) Pods. They can also do session affinity, send traffic to endpoints outside the cluster, and handle both TCP (the default) and UDP.
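Session affinity and UDP are just fields in the Service spec. A sketch with a hypothetical Service sticky-svc and a hypothetical UDP port 8125; once a Service exposes more than one port, every port needs a name.

```yaml
# Hypothetical Service showing session affinity and a UDP port
apiVersion: v1
kind: Service
metadata:
  name: sticky-svc
spec:
  selector:
    app: web
  sessionAffinity: ClientIP       # requests from the same client IP go to the same Pod
  sessionAffinityConfig:
    clientIP:
      timeoutSeconds: 3600        # how long the stickiness lasts, in seconds
  ports:
    - name: http                  # names are required once a Service has several ports
      protocol: TCP               # the default, could be left out
      port: 80
      targetPort: 80
    - name: metrics               # hypothetical UDP port
      protocol: UDP
      port: 8125
      targetPort: 8125
```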

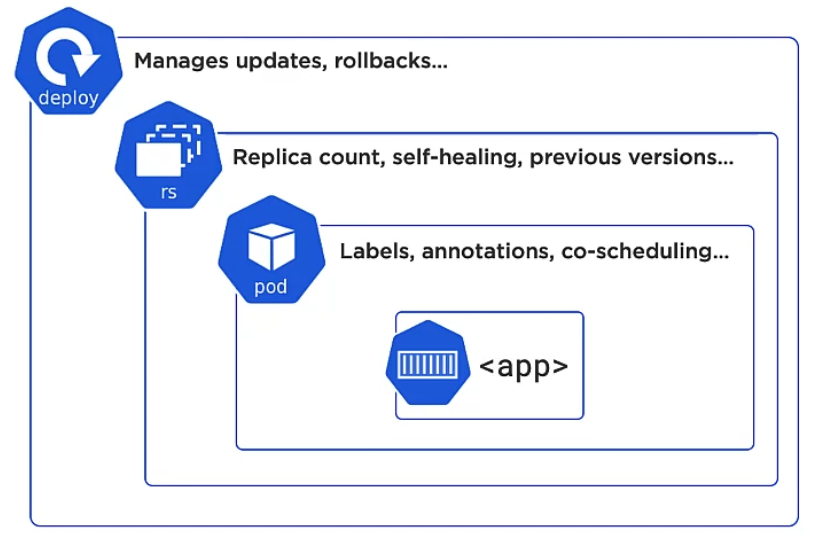

Pods don’t self-heal. For that we have to rely on higher-level objects such as ReplicaSets (rs) and Deployments (deploy).
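A minimal Deployment sketch (name, replica count and image tag are assumptions) that keeps three nginx Pods running. Its Pod template carries the app: nginx label, so the nginx Services shown later in this article would pick these Pods up.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx                 # hypothetical name
spec:
  replicas: 3                 # the underlying ReplicaSet replaces Pods that die
  selector:
    matchLabels:
      app: nginx              # which Pods this Deployment manages
  template:
    metadata:
      labels:
        app: nginx            # the label the nginx Services select on
    spec:
      containers:
        - name: nginx
          image: nginx:1.25
          ports:
            - containerPort: 80
```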

Managing Services

You can create Services declaratively via a YAML file (preferred) or imperatively via kubectl commands.

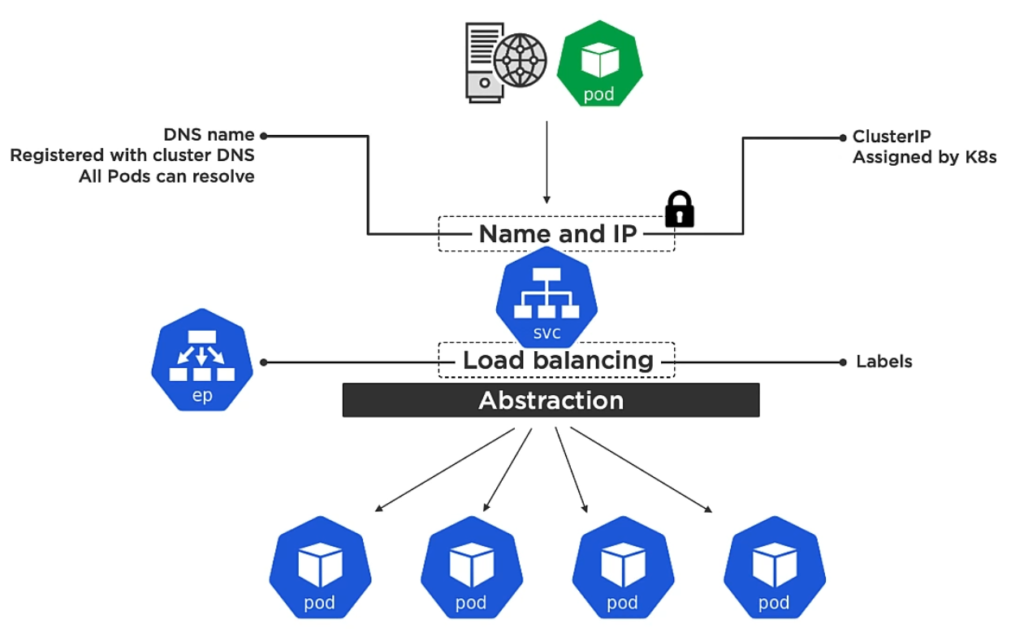

Declarative: You define Services in a YAML file and connect a Service to Pods by giving the Pods the labels listed in the Service’s selector. A Service is a REST object of the Kubernetes API. When you create a Service with a selector, Kubernetes additionally creates an Endpoints object with the same name, which contains a list of all the healthy Pods that match the Service’s selector.
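You normally don’t write an Endpoints object yourself, but looking at one helps. For the nginx Service defined further down, kubectl get endpoints nginx -o yaml returns something like the following trimmed-down sketch; the IP addresses are made up.

```yaml
# Trimmed-down sketch of an Endpoints object generated by Kubernetes (IPs are made up)
apiVersion: v1
kind: Endpoints
metadata:
  name: nginx              # same name as the Service it belongs to
subsets:
  - addresses:             # ready Pods matching the Service's selector
      - ip: 10.244.0.12
      - ip: 10.244.0.13
    notReadyAddresses:     # matching Pods that are not ready yet get no traffic
      - ip: 10.244.0.14
    ports:
      - name: http
        port: 80
        protocol: TCP
```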

Service communication

When accessing Pods through a Service, we have to distinguish between access from inside the cluster and access from outside (e.g. via a browser on your laptop).

If you have minikube installed, this handy command prints the URL under which a service is reachable: minikube service <service-name> --url.

Internal communication

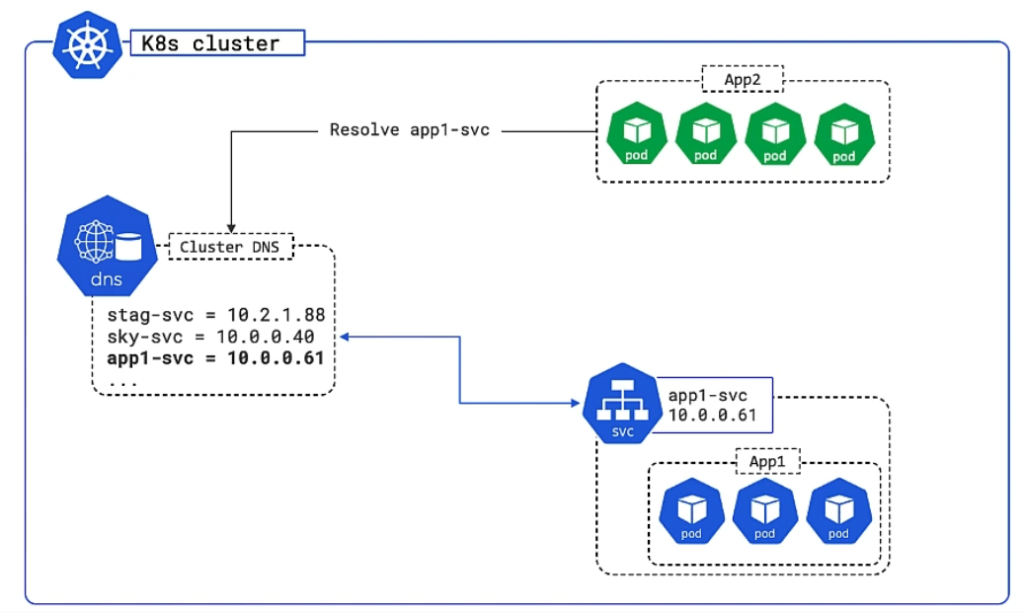

A Service provides a single, stable cluster IP address, and the service name is registered in the cluster’s internal DNS. Clusters run such an internal DNS server, nowadays usually CoreDNS, and Pods are configured to use it by default. As a consequence, every container and Pod can resolve service names.

In the diagram, App2 wants to communicate internally with app1-svc, so it first looks up the service’s IP in the cluster DNS and then uses the resolved IP to communicate.

In the YAML file you can specify type: ClusterIP or leave it out, because it is the default:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx # becomes the internal DNS name of the Service
  labels:
    app: nginx
spec:
  # type: ClusterIP is the default, so it is omitted here
  selector:
    app: nginx
  ports:
    - name: http
      port: 80
      targetPort: 80
```
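To see the internal DNS at work, here is a sketch of a hypothetical client Pod (name, image and loop are assumptions) in the same namespace as the nginx Service. It reaches the Service just by its name; the cluster DNS resolves nginx to the Service’s cluster IP.

```yaml
# Hypothetical client Pod in the same namespace as the nginx Service
apiVersion: v1
kind: Pod
metadata:
  name: client
spec:
  containers:
    - name: client
      image: busybox:1.36
      # "nginx" is resolved via the cluster DNS to the Service's cluster IP
      command: ["sh", "-c", "while true; do wget -qO- http://nginx; sleep 5; done"]
```

kubectl logs client should then show the nginx welcome page being fetched every five seconds.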

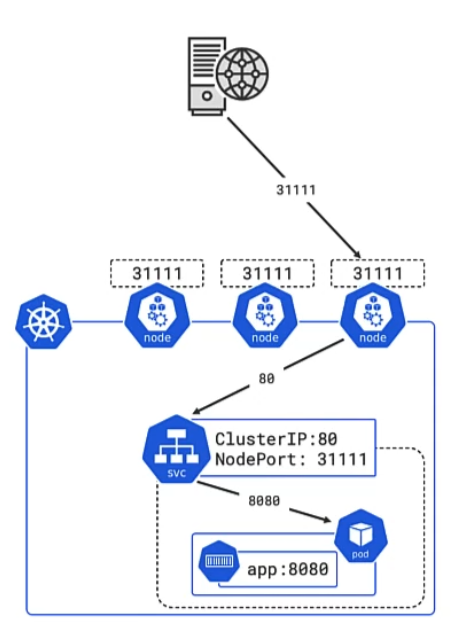

External communication (via NodePort)

Access from outside the cluster can be achieved like this: a Service of type NodePort additionally gets a port that is opened on every cluster node, for example port 31111 for app1-svc (by default, node ports come from the range 30000-32767). When a request from outside reaches any of the nodes on that port, it is forwarded to the Service and from there to one of the Pods behind it.

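Here is an imperative (instead of declarative) way to make a Pod reachable from outside. The following command creates a NodePort Service named hello-svc in front of the Pod hello-pod, which is also registered in the cluster DNS as hello-svc, and forwards traffic to the Pod’s port 8080. The Pod needs at least one label, because kubectl expose uses the Pod’s labels as the Service’s selector:

```shell
kubectl expose pod hello-pod --name=hello-svc --port=8080 --target-port=8080 --type=NodePort
```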

And this is how you would define a NodePort Service declaratively, here for Pods labeled app: nginx and with a fixed node port:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx # becomes the internal DNS name of the Service
  labels:
    app: nginx
spec:
  type: NodePort
  selector:
    app: nginx
  ports:
    - name: http
      port: 80          # port on the Service's cluster IP
      targetPort: 80    # container port on the Pods
      nodePort: 31000   # port opened on every node (default range 30000-32767)
```

Now, when you open any node’s IP address with port 31000 in your browser, the nginx web server listening on port 80 inside the Pods should be displayed.

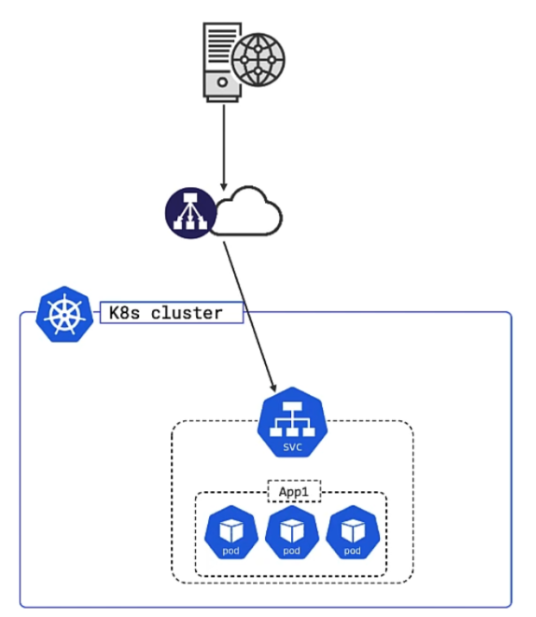

External communication (via LoadBalancer)

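A Service of type LoadBalancer sets up a load balancer that can be reached from outside the cluster. On cloud platforms, the cloud provider typically provisions this load balancer automatically: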

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx # becomes the internal DNS name of the Service
  labels:
    app: nginx
spec:
  type: LoadBalancer
  selector:
    app: nginx
  ports:
    - name: http
      port: 80
      targetPort: 80
```

Apply the service file: kubectl apply -f service.yml.

Get a description of the service with kubectl describe svc nginx.

Get a description of the Endpoints object (list of healthy Pods) with kubectl describe ep nginx.

ExternalName Service

If your Pods need to reach an external service whose DNS name might change, it is better to let them go through an internal Service of type ExternalName first. Such a Service doesn’t proxy any traffic: the cluster DNS simply answers requests for the Service name with a CNAME record pointing to the external name. That way you only have to adjust this one internal Service whenever the external service changes.

In the following example we want to reach an external service hosted at api.acmecorp.com by creating an internal Service called external-service (a bit odd, I know). Pods can then use the name external-service instead of api.acmecorp.com. Because only DNS is involved, ports are not remapped: a client connecting to external-service on port 9000 reaches api.acmecorp.com on port 9000:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: external-service
spec:
  type: ExternalName
  externalName: api.acmecorp.com
  ports:
    - port: 9000
```

Namespaces and DNS

When you create a Service, it creates a corresponding DNS entry. This entry is of the form <service-name>.<namespace-name>.svc.cluster.local (cluster.local being the default cluster domain), which means that if a container uses just <service-name>, it will resolve to the Service in the container’s own namespace. This is useful for using the same configuration across multiple namespaces such as Development, Staging and Production. If you want to reach a Service in another namespace, you need to use the fully qualified domain name (FQDN).
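A sketch of both cases, assuming a hypothetical Service named api exists in two hypothetical namespaces, staging and production, and that the cluster uses the default domain cluster.local:

```yaml
# Hypothetical Pod in the "staging" namespace; assumes a Service named "api"
# exists in both "staging" and "production" and the default domain cluster.local
apiVersion: v1
kind: Pod
metadata:
  name: client
  namespace: staging
spec:
  containers:
    - name: client
      image: busybox:1.36
      command: ["sh", "-c", "sleep 3600"]
      env:
        - name: API_URL            # short name: resolves to "api" in staging
          value: "http://api"
        - name: PROD_API_URL       # other namespace: needs the FQDN
          value: "http://api.production.svc.cluster.local"
```

Because API_URL uses the short name, the same manifest deployed into the production namespace would talk to the production api Service without any change.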